What are the different types of Content Moderation?

Content moderation is the practice of monitoring and regulating content posted on online platforms, such as social media, websites, forums, and blogs, to ensure that it adheres to each platform's policies and community guidelines. It is essential to maintaining a safe and healthy online environment: it helps prevent the spread of inappropriate or harmful content and preserves a positive user experience.

In this blog post, we will explore the different types of content moderation methods that are commonly used across the internet. We will discuss their advantages and disadvantages, as well as the contexts in which they are most effective.

Pre-moderation:

Pre-moderation involves reviewing content before it becomes publicly visible on a platform. Moderators or automated systems check submitted content to ensure it complies with the platform's guidelines and policies. Only approved content is published, while anything that violates the rules is rejected.
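Here is a minimal Python sketch of a pre-moderation queue that shows how the workflow fits together. It is illustrative only. The `PreModerationQueue` class and the `keyword_review` rule are hypothetical, and the single blocked word stands in for a platform's real guidelines, which a human moderator or an automated system would apply.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PreModerationQueue:
    """Hypothetical sketch: holds every submission until a reviewer decides on it."""
    review: Callable[[str], bool]  # True means the content complies with the guidelines
    pending: deque = field(default_factory=deque)
    published: list = field(default_factory=list)
    rejected: list = field(default_factory=list)

    def submit(self, content: str) -> None:
        # Submitted content is NOT visible yet; it waits for review.
        self.pending.append(content)

    def process(self) -> None:
        # Review pending items in submission order; only approved items go public.
        while self.pending:
            item = self.pending.popleft()
            if self.review(item):
                self.published.append(item)
            else:
                self.rejected.append(item)


# Hypothetical guideline: reject any post containing a blocked word.
BLOCKED_WORDS = {"spam"}


def keyword_review(text: str) -> bool:
    return not any(word in BLOCKED_WORDS for word in text.lower().split())


queue = PreModerationQueue(review=keyword_review)
queue.submit("Hello everyone")
queue.submit("Buy spam now")
print(queue.published)  # [] : nothing is public before review
queue.process()
print(queue.published)  # ['Hello everyone']
print(queue.rejected)   # ['Buy spam now']
```

Nothing reaches `published` until `process()` runs, so the sketch also shows the main cost of this approach: every post waits for a reviewer.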

Advantages:

- Ensures a high level of control over content

- Maintains a consistent quality of published content

- Reduces the risk of users being exposed to harmful or offensive material

Disadvantages:

- Slows down content publishing, which may frustrate users

- Requires a significant amount of resources to moderate content efficiently

Post-moderation:

Post-moderation allows content to be published immediately and reviewed after it goes live. Moderators or automated systems monitor published content and remove anything that violates the platform's policies. Users may also report inappropriate content for review and removal.
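The following minimal Python sketch of the post-moderation flow uses a hypothetical `PostModeratedFeed` class and a one-word guideline. It highlights the key difference from reviewing before publication: violating content stays visible between `publish()` and the next `review_pass()`.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PostModeratedFeed:
    """Hypothetical sketch: publishes immediately and reviews afterwards."""
    review: Callable[[str], bool]  # True means the content complies with the guidelines
    live: list = field(default_factory=list)
    removed: list = field(default_factory=list)

    def publish(self, content: str) -> None:
        # Content is visible to everyone the moment it is posted.
        self.live.append(content)

    def review_pass(self) -> None:
        # A later sweep (by moderators or software) takes down violations.
        still_live = []
        for item in self.live:
            if self.review(item):
                still_live.append(item)
            else:
                self.removed.append(item)
        self.live = still_live


# Hypothetical guideline: no posts containing the word "spam".
feed = PostModeratedFeed(review=lambda text: "spam" not in text.lower().split())
feed.publish("Nice photo!")
feed.publish("Buy spam now")
print(feed.live)     # ['Nice photo!', 'Buy spam now'] : both visible until reviewed
feed.review_pass()
print(feed.live)     # ['Nice photo!']
print(feed.removed)  # ['Buy spam now']
```

For simplicity the sketch re-checks every live item on each pass. A real system would more likely track which items have already been reviewed.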

Advantages:

- Allows for faster content publishing

- Encourages user interaction and engagement

- Relies on community involvement to report inappropriate content

Disadvantages:

- Increases the risk of users encountering harmful or offensive content

- Large volumes of live content can be difficult to review promptly

Reactive moderation:

Reactive moderation relies on user reports to identify and remove inappropriate content. Platforms using this method encourage users to flag content that violates guidelines, and the flagged content is then reviewed by moderators or automated systems.
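One way to sketch the reporting mechanism in Python is as a count of distinct reporters per item. The `ReportTracker` class and the threshold of three reports are hypothetical choices made for illustration. Counting distinct users rather than raw reports is one simple way to blunt repeated reports from a single account.

```python
from collections import defaultdict

REPORT_THRESHOLD = 3  # hypothetical: distinct reporters needed before review


class ReportTracker:
    """Hypothetical sketch: queues content for review once enough users flag it."""

    def __init__(self, threshold: int = REPORT_THRESHOLD) -> None:
        self.threshold = threshold
        self.reporters = defaultdict(set)  # content_id -> set of user_ids who flagged it
        self.review_queue = []             # content_ids awaiting a moderator or automated check

    def flag(self, content_id: str, user_id: str) -> None:
        reporters = self.reporters[content_id]
        reporters.add(user_id)  # repeat reports from the same user are ignored
        if len(reporters) == self.threshold:
            # Queue the item exactly once, when the threshold is first reached.
            self.review_queue.append(content_id)


tracker = ReportTracker()
tracker.flag("post-1", "alice")
tracker.flag("post-1", "alice")  # duplicate: still one reporter
tracker.flag("post-1", "bob")
print(tracker.review_queue)  # [] : post-1 is still visible and unreviewed
tracker.flag("post-1", "carol")
print(tracker.review_queue)  # ['post-1']
```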

Advantages:

- Reduces the workload for moderators

- Empowers users to contribute to a safe online environment

Disadvantages:

- Inappropriate content may remain visible until reported

- Users may misuse the reporting system for personal reasons

Distributed moderation:

Distributed moderation involves users in the moderation process, allowing them to vote on content to determine its appropriateness. Platforms that use this method often have a reputation or points system that rewards users for their moderation efforts.
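Below is a minimal Python sketch of community voting with a points reward. The `CommunityVoting` class, the one-point reward per vote, and the hide threshold of -3 are all hypothetical. Real platforms tune such rules to their own communities.

```python
from collections import Counter

HIDE_SCORE = -3  # hypothetical: content is hidden once its net score falls to this value


class CommunityVoting:
    """Hypothetical sketch: users vote content up or down and earn points for voting."""

    def __init__(self) -> None:
        self.votes = {}          # content_id -> {user_id: +1 or -1}
        self.points = Counter()  # user_id -> moderation points earned

    def vote(self, content_id: str, user_id: str, up: bool) -> None:
        ballots = self.votes.setdefault(content_id, {})
        if user_id not in ballots:
            self.points[user_id] += 1  # reward only the first vote per item
        ballots[user_id] = 1 if up else -1  # re-voting replaces the earlier vote

    def score(self, content_id: str) -> int:
        return sum(self.votes.get(content_id, {}).values())

    def is_visible(self, content_id: str) -> bool:
        return self.score(content_id) > HIDE_SCORE


board = CommunityVoting()
for user in ["ana", "ben", "cy"]:
    board.vote("post-7", user, up=False)
board.vote("post-7", "dee", up=True)
print(board.score("post-7"), board.is_visible("post-7"))  # -2 True
board.vote("post-7", "eli", up=False)
print(board.score("post-7"), board.is_visible("post-7"))  # -3 False
```

Every vote carries equal weight here, so the sketch does nothing to counter the mob-mentality risk listed below. One possible variation is to weight each vote by the voter's reputation.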

Advantages:

- Engages the community in content moderation

- Reduces the need for dedicated moderators or automated systems

Disadvantages:

- May lead to mob mentality and biased moderation

- Inappropriate content may still be visible until it is voted down

Automated moderation:

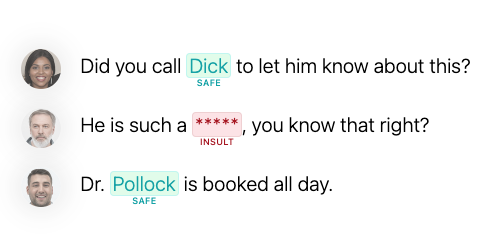

Automated moderation uses artificial intelligence (AI) and machine learning algorithms to review content. These systems detect and remove content that violates guidelines based on predefined criteria or learned patterns. Automated moderation can be used in conjunction with human moderators to improve efficiency.
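A small rule-based filter in Python can illustrate the "predefined criteria or patterns" approach. This is a deliberately simple stand-in, not an AI model. The regular-expression rules, their point values, and both thresholds are hypothetical, and an AI-based system would typically replace `violation_score` with the output of a trained classifier. The middle band routes uncertain cases to `human_review`, which shows one way to combine automated checks with human moderators.

```python
import re

# Hypothetical rules: (pattern, points added to the violation score when it matches).
RULES = [
    (re.compile(r"\bfree\s+money\b", re.IGNORECASE), 6),
    (re.compile(r"https?://\S+"), 3),
    (re.compile(r"(.)\1{5,}"), 2),  # one character repeated 6+ times, e.g. "!!!!!!"
]
REMOVE_AT = 8  # hypothetical: remove automatically at or above this score
REVIEW_AT = 4  # hypothetical: escalate to a human moderator from here up to REMOVE_AT


def violation_score(text: str) -> int:
    # Sum the points of every rule whose pattern appears anywhere in the text.
    return sum(points for pattern, points in RULES if pattern.search(text))


def decide(text: str) -> str:
    score = violation_score(text)
    if score >= REMOVE_AT:
        return "remove"
    if score >= REVIEW_AT:
        return "human_review"  # uncertain cases go to a person
    return "allow"


print(decide("See you at the meetup tonight"))      # allow
print(decide("Claim your FREE MONEY today"))        # human_review
print(decide("Free money here: http://x.example"))  # remove
```

Rules like these also produce false positives. A warning such as "a free money scam is circulating, see https://example.com/alert" scores 9 and would be removed even though it is legitimate.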

Advantages:

- Efficiently handles large volumes of content

- Can be used 24/7 without the need for human intervention

Disadvantages:

- May produce false positives (acceptable content removed) or false negatives (violating content missed)

- Interprets context and nuance less reliably than human moderators

Conclusion:

Content moderation is a crucial aspect of maintaining a safe and positive online environment. There is no one-size-fits-all solution, as each moderation method has its advantages and disadvantages. The most effective content moderation strategy will depend on the platform, its user base, and its specific needs. By understanding the different types of content moderation methods available, platform owners can make informed decisions about the best approach to keep their online communities safe and thriving.
