UK Online Safety Bill and Content Moderation

The UK Online Safety Bill aims to protect children and adults online in the UK. As the bill is still progressing through the legislative process, this guide explains what is at stake and how content moderation can help.

What is the Online Safety Bill?

The Online Safety Bill is a proposed set of UK regulations whose main goal is to protect adults and children online and to make platforms more accountable for how they keep their users safe. Ofcom, the UK's communications regulator, has been designated to enforce the new rules.

The bill is currently going through the UK Parliament and is expected to be adopted in fall 2023.

Who is in scope?

The Online Safety Bill will apply to the following types of services:

- User-to-user services with a significant number of UK users, i.e. any service that lets people encounter user-generated content (UGC), meaning content uploaded or shared by other users (social media platforms, chat and online messaging platforms, gaming platforms, etc.)
- Search engines
- Services hosting pornographic content

How to prepare for the Online Safety Bill?

The Online Safety Bill may affect your business and operations, so you should be ready to take action to ensure compliance. Here are some key steps:

- Illegal content moderation:
  - Provide clear rules and policies to counter the risks of illegal and legal but harmful content on the platform
  - Use a content moderation system to quickly remove illegal content such as terrorist content, Child Sexual Abuse Material (CSAM), harassment, drug or weapon dealing and fraud, as well as content promoting self-harm
  - Prevent this kind of content from appearing in the first place
- User protection, especially for minors:
  - Strengthen age-checking features
  - Make sure minors cannot access legal but harmful content, such as pornography
  - Report Child Sexual Exploitation (CSE) content to the National Crime Agency
- Transparency:
  - Inform your users about how content moderation works and how it affects their experience on the platform
  - Be as transparent as possible about the risks posed to users, especially minors
  - Provide a clear way for users to report content
  - Give users the ability to choose and monitor what they see
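The removal and prevention steps listed above are often implemented as a check that runs before a post is published. The sketch below is a minimal illustration under loudly stated assumptions: `classify` is a hypothetical function standing in for whatever moderation model or API you use, returning a score between 0 and 1 per category; the category names and thresholds are made-up example values, not figures from the bill or from any product; and only text is handled.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical per-category thresholds for illegal content (illustrative values only).
BLOCK_THRESHOLDS: Dict[str, float] = {
    "terrorism": 0.8,
    "csam": 0.5,
    "weapons_or_drugs": 0.9,
    "self_harm": 0.8,
}
# Scores at or above this (but below the block threshold) go to human review.
REVIEW_THRESHOLD = 0.5
# Hypothetical legal but harmful categories: hidden from minors rather than removed.
ADULT_ONLY_CATEGORIES = {"nudity"}
ADULT_ONLY_THRESHOLD = 0.7


@dataclass
class Decision:
    action: str  # "block", "review", "adults_only" or "publish"
    reason: str


def moderate(text: str, classify: Callable[[str], Dict[str, float]]) -> Decision:
    """Decide what to do with a piece of UGC before it is published."""
    scores = classify(text)
    # 1. Block clearly illegal content so it never appears on the platform.
    for category, threshold in BLOCK_THRESHOLDS.items():
        if scores.get(category, 0.0) >= threshold:
            return Decision("block", category)
    # 2. Send borderline scores for illegal categories to human moderators.
    for category in BLOCK_THRESHOLDS:
        if scores.get(category, 0.0) >= REVIEW_THRESHOLD:
            return Decision("review", category)
    # 3. Keep legal but harmful content away from minors.
    for category in ADULT_ONLY_CATEGORIES:
        if scores.get(category, 0.0) >= ADULT_ONLY_THRESHOLD:
            return Decision("adults_only", category)
    return Decision("publish", "no category above threshold")


def fake_classifier(text: str) -> Dict[str, float]:
    """Hypothetical stand-in for a real moderation model or API."""
    return {"weapons_or_drugs": 0.6} if "for sale" in text else {}


if __name__ == "__main__":
    print(moderate("Rifle for sale, DM me", fake_classifier).action)
```

Checking illegal categories first means a post is blocked outright whenever any illegal category is confident enough, and only otherwise considered for review or age-gating. Real thresholds would need tuning against your own data and moderation policy.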

Keep in mind that the Online Safety Bill may still change, so stay informed and up to date with its requirements.

What are the sanctions in case of non-compliance?

Companies that fail to comply with the Online Safety Bill may face fines or criminal sanctions.

Fines may reach £18 million or 10% of the company's annual worldwide turnover, whichever is greater.
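To make the fine cap concrete, here is a minimal sketch assuming the maximum fine is the greater of £18 million and 10% of annual worldwide turnover. It only computes an upper bound; any actual penalty would be set by Ofcom. The function and constant names are hypothetical, chosen for illustration.

```python
# Minimal sketch: upper bound on an Online Safety Bill fine, assuming the cap is
# the greater of £18M and 10% of annual worldwide turnover (all amounts in GBP).
FIXED_CAP_GBP = 18_000_000
TURNOVER_PERCENT = 10


def max_fine_gbp(annual_worldwide_turnover_gbp: int) -> int:
    """Return the maximum possible fine in whole pounds for a given turnover."""
    if annual_worldwide_turnover_gbp < 0:
        raise ValueError("turnover cannot be negative")
    # 10% of turnover, using integer arithmetic (rounded down to the whole pound).
    turnover_based_cap = annual_worldwide_turnover_gbp * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_GBP, turnover_based_cap)


if __name__ == "__main__":
    for turnover in (50_000_000, 2_000_000_000):
        print(f"turnover £{turnover:,} -> max fine £{max_fine_gbp(turnover):,}")
```

For a smaller company the fixed £18 million figure dominates; the turnover-based cap only takes over once turnover exceeds £180 million.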

Tech company executives could also face prosecution if they fail to protect children online.

What about Content Moderation under the Online Safety Bill?

Compliance, accountability and transparency are the three concepts to keep in mind. Content moderation is essential to create and maintain a safe and healthy environment for users, and it becomes even more crucial under the Online Safety Bill. It is a service that can:

- Help platforms comply with the regulations by quickly and proactively detecting illegal and legal but harmful content, ensuring the safety of both adults and minors
- Protect minors by making sure they can only access content they are allowed to see
- Provide guidance to platforms on how identified illegal and harmful UGC should be treated under the law
- Improve transparency and accountability by giving more visibility into the types of UGC found on the platform and how it is monitored

Read more

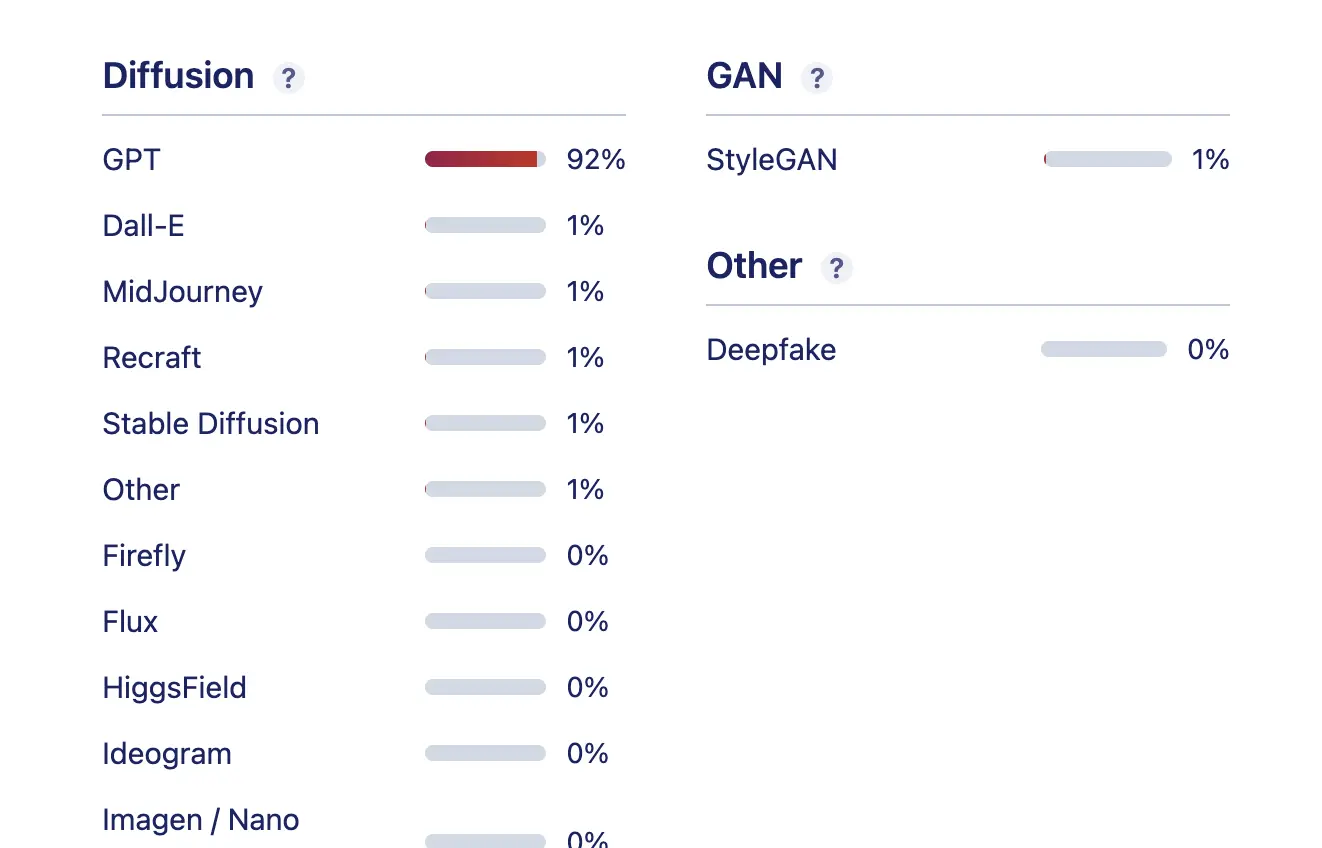

Beyond Binary: Why Per-Generator AI Detection Matters

Learn why per-generator confidence scores across 20+ AI generators give you the granularity that binary AI detection can't.

Joining the Online Dating & Discovery Association: Towards Safer Connections

This is a step to further enhance end-user safety in the online dating realm.